From 4 Hours to 15: What Autonomous Coding Means for Your GTM Org

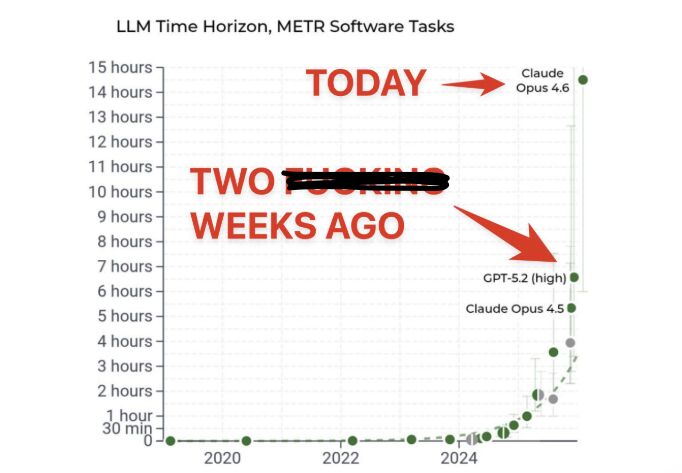

In December 2024, I posted about the scariest AI chart I had seen. The headline: AI can reliably complete 4-hour coding tasks. I thought that was a massive deal. I said we’d see GTM teams start to look more like product teams — shipping experiments, measuring results, iterating on what works.

Two weeks later, that number hit 7 hours.

Today, it’s 15.

The chart keeps going up, and the implications for GTM are not theoretical anymore.

The Chart That Started This

The benchmark tracks how long a coding task AI models can reliably complete autonomously, with no human hand-holding and no mid-task correction. You describe what you want, walk away, and come back to working code.

When I first saw it in late 2024, o3 was at the bottom of the chart and the ceiling was 4 hours. That already felt significant. Four hours of autonomous coding meant you could describe a meaningful project before lunch and have it done by the time you got back.

But the pace of improvement is what caught my attention. This wasn’t a gradual climb. It was a steep curve, and every new model release pushed it further. The gap between “interesting demo” and “production-grade capability” was collapsing fast.

My prediction at the time: GTM teams are going to want their own agents and proprietary signals built for them. The most important metrics will shift from rep activity — “How many connection requests sent today?” — to “How quickly can we ship experiments and what is their impact on our pipeline?”

That prediction is playing out faster than I expected.

What 15 Hours of Autonomous Coding Actually Means

Fifteen hours is not a toy demo. Fifteen hours is a full build. It’s enough time to architect, implement, test, and deploy a real system. For GTM, that translates to specific projects that previously required a dedicated team or a multi-week engagement.

Lead scoring model built from your actual deal history

Take all your closed-won and closed-lost deals from the past two years. Feed in the firmographic data, the engagement data, the deal velocity, the objections logged in your CRM. Build a scoring model that tells your reps which accounts to prioritize based on patterns from deals you’ve actually won — not generic “intent data” from a vendor who has no context on your business.

This used to take a RevOps team weeks: scope the project, pull and clean the data, build the model, validate it, and deploy it. Now you describe what you want, point it at your CRM data, and review the output over coffee.
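To make the idea concrete, here is a minimal sketch of scoring accounts from deal history. Everything in it is an assumption for illustration: the `DEALS` records, the `industry` and `size` fields, and the choice of smoothed win rates as the model. A real build would pull from your CRM export and would likely use a richer model.

```python
from collections import defaultdict

# Hypothetical deal history; in practice this would come from a CRM export.
DEALS = [
    {"industry": "fintech", "size": "mid", "won": True},
    {"industry": "fintech", "size": "mid", "won": True},
    {"industry": "fintech", "size": "small", "won": False},
    {"industry": "retail", "size": "mid", "won": False},
    {"industry": "retail", "size": "small", "won": False},
    {"industry": "retail", "size": "small", "won": True},
]
FEATURES = ("industry", "size")  # assumed firmographic fields


def fit_win_rates(deals, features=FEATURES):
    """Return smoothed win rates keyed by (feature, value)."""
    counts = defaultdict(lambda: [0, 0])  # (feature, value) -> [wins, total]
    for deal in deals:
        for feature in features:
            entry = counts[(feature, deal[feature])]
            entry[0] += int(deal["won"])
            entry[1] += 1
    # Laplace smoothing (+1 win, +1 loss) keeps rare values away from 0 or 1.
    return {key: (wins + 1) / (total + 2) for key, (wins, total) in counts.items()}


def score_account(account, rates, features=FEATURES, default=0.5):
    """Average the win rates of an account's feature values (range 0..1)."""
    values = [rates.get((f, account[f]), default) for f in features]
    return sum(values) / len(values)


rates = fit_win_rates(DEALS)
accounts = [
    {"name": "Globex", "industry": "retail", "size": "small"},
    {"name": "Acme", "industry": "fintech", "size": "mid"},
]
# Highest-scoring accounts first, so reps see priorities at the top.
ranked = sorted(accounts, key=lambda a: score_account(a, rates), reverse=True)
```

The point of the sketch is the shape of the system: your own closed-won and closed-lost history goes in, and a ranked account list comes out.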

Messaging frameworks from 6 months of call recordings

Pull six months of Gong or Fathom transcripts. Have the system analyze which pain points come up most in deals that close, what language buyers actually use to describe their problems, which objections are most common, and which talk tracks correlate with progression. Turn all of that into a structured messaging framework — by persona, by segment, by deal stage.

Product marketing teams spend entire quarters on this kind of analysis. A GTM engineer with Claude Code can ship a first version in a day and iterate on it weekly.
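A first pass at that analysis can be very simple. The sketch below counts how many calls mention each pain-point theme, split by whether the deal closed. The `THEMES` phrases and the `CALLS` records are made up for illustration, and plain substring matching is an assumption standing in for whatever analysis the real system would run on your Gong or Fathom exports.

```python
from collections import Counter

# Hypothetical pain-point themes and the phrases that signal them.
THEMES = {
    "manual_reporting": ("spreadsheet", "manual report"),
    "data_quality": ("bad data", "duplicate"),
}

# Hypothetical transcripts tagged with the deal outcome.
CALLS = [
    {"won": True, "text": "We live in spreadsheets and every manual report takes days."},
    {"won": True, "text": "Too many duplicate records and another spreadsheet to fix them."},
    {"won": False, "text": "Honestly the bad data is annoying but not urgent."},
]


def theme_mentions(calls, themes=THEMES):
    """Count calls that mention each theme, split into (won, lost) counters."""
    won, lost = Counter(), Counter()
    for call in calls:
        text = call["text"].lower()
        bucket = won if call["won"] else lost
        for theme, phrases in themes.items():
            # A call counts once per theme, however many phrases match.
            if any(phrase in text for phrase in phrases):
                bucket[theme] += 1
    return won, lost


won, lost = theme_mentions(CALLS)
```

Comparing the two counters shows which themes show up more often in deals that close, which is the raw material for a framework by persona, segment, and deal stage.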

Segment-specific ABM campaigns, end to end

Start with a tier 1 account list. Research each company individually — not generic firmographic enrichment, but real research. What are they hiring for? What did their CEO say on the last earnings call? What technology decisions have they made recently? What competitive pressures are they facing?

Then generate account-specific angles, customized outbound copy, ad copy, landing page variants, and AE talk tracks. All personalized to each account based on the research.

This is the kind of ABM that enterprise companies dedicate entire teams to for months. Fifteen hours of autonomous coding makes it accessible to a Series A startup with a two-person sales team.

Competitive intelligence — weekly, not quarterly

Most companies update their competitive intelligence decks once a quarter, if that. By the time the deck is done, half the information is stale.

With this level of autonomous coding, you can build a system that runs weekly and tracks hiring patterns (what roles are competitors adding?), G2 review sentiment (what are their customers complaining about?), LinkedIn activity (what content themes are they pushing?), ad history (what messaging are they testing?), pricing page changes, content strategy shifts, and job posting language that hints at product direction.

This isn’t a one-time report. It’s a running system that updates your competitive intelligence automatically and flags meaningful changes.
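The "flags meaningful changes" part comes down to comparing this week's snapshot against last week's. Here is a minimal sketch using Python's standard `difflib`. The pricing text is invented, and how you fetch and store snapshots is left out entirely; treat `changed_lines` as a hypothetical helper, not a finished monitor.

```python
import difflib


def changed_lines(previous: str, current: str) -> list[str]:
    """Return lines removed ("- ") or added ("+ ") between two text snapshots."""
    diff = difflib.ndiff(previous.splitlines(), current.splitlines())
    # ndiff also emits unchanged ("  ") and hint ("? ") lines; drop those.
    return [line for line in diff if line.startswith(("+ ", "- "))]


# Hypothetical snapshots of a competitor's pricing page, one week apart.
last_week = "Starter: $49/mo\nPro: $99/mo"
this_week = "Starter: $49/mo\nPro: $129/mo"

changes = changed_lines(last_week, this_week)
if changes:
    print("Pricing page changed:")
    for line in changes:
        print(" ", line)
```

The same comparison works for job postings, ad copy, or any other text you snapshot on a schedule. An empty result means nothing to flag that week.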

Sales enablement library built from deal data

Take your deal data, your call recordings, your email threads, your proposal history, and build a searchable library of what’s actually worked. Organized by persona, by objection, by deal stage, by industry vertical. Updated continuously as new deals close.

Not a static Google Drive folder that nobody opens. A living system that your reps can query in natural language: “Show me how we handled the ‘we already have an internal team’ objection in fintech deals over $50K.”
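Under the natural-language layer, a query like that becomes a structured filter over tagged records. The sketch below shows only that filter. The `Play` record, its fields, and the `LIBRARY` entries are all hypothetical, and translating a rep's question into the filter arguments is assumed to happen elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Play:
    """One hypothetical library entry: how an objection was handled in a deal."""
    objection: str
    industry: str
    deal_size: int  # in dollars
    summary: str


# Hypothetical entries; a real library would be built from deal and call data.
LIBRARY = [
    Play("we already have an internal team", "fintech", 80_000,
         "Positioned as capacity for the internal team, not a replacement."),
    Play("we already have an internal team", "fintech", 30_000,
         "Offered a scoped pilot alongside the internal team."),
    Play("too expensive", "fintech", 120_000,
         "Anchored on cost of delay."),
    Play("we already have an internal team", "healthcare", 90_000,
         "Led with compliance workload."),
]


def find_plays(library, objection, industry, min_deal_size=0):
    """Return entries matching the objection text, industry, and deal size floor."""
    needle = objection.lower()
    return [
        play for play in library
        if needle in play.objection.lower()
        and play.industry == industry
        and play.deal_size > min_deal_size
    ]


matches = find_plays(LIBRARY, "internal team", "fintech", min_deal_size=50_000)
```

With the sample data, the query from the example above narrows four entries down to the one fintech deal over $50K where that objection came up.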

GTM Teams Become Product Teams

This is the thesis I laid out in December, and it’s only gotten stronger.

Traditional GTM operates like a services organization. Ideas go through layers of approval. Campaigns take weeks to build. Experimentation is slow because every new test requires coordination across Marketing, RevOps, SDRs, and leadership.

Product teams operate differently. They ship fast, measure everything, kill what doesn’t work, and double down on what does. They run experiments in parallel. They instrument every step. They make decisions with data, not opinions.

When a single GTM engineer can build and deploy the systems I described above — lead scoring, messaging frameworks, ABM campaigns, competitive intelligence, enablement libraries — in days instead of quarters, your GTM org starts operating with product team velocity.

The metrics change too. Instead of measuring activity (“How many emails did we send?”), you measure velocity and impact (“How quickly can we ship experiments and what is their effect on pipeline?”). Instead of asking SDRs to do more, you ask what systems can make each action more effective.
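One way to put numbers on those two questions is sketched below: velocity as the median days from proposing an experiment to launching it, and impact as the pipeline change attributed to each experiment. The experiment log, its field names, and the dollar figures are invented for illustration, and how you attribute pipeline to an experiment is its own problem that this sketch assumes away.

```python
from datetime import date
from statistics import median

# Hypothetical experiment log; pipeline_delta is attributed pipeline in dollars.
EXPERIMENTS = [
    {"name": "new-subject-lines", "proposed": date(2026, 1, 5),
     "launched": date(2026, 1, 8), "pipeline_delta": 40_000},
    {"name": "webinar-follow-up", "proposed": date(2026, 1, 6),
     "launched": date(2026, 1, 16), "pipeline_delta": -5_000},
    {"name": "tier-1-abm-pilot", "proposed": date(2026, 1, 12),
     "launched": date(2026, 1, 17), "pipeline_delta": 25_000},
]


def velocity_days(experiments):
    """Median days from proposing an experiment to launching it."""
    return median((e["launched"] - e["proposed"]).days for e in experiments)


def total_impact(experiments):
    """Sum of attributed pipeline change across experiments."""
    return sum(e["pipeline_delta"] for e in experiments)


def winners(experiments):
    """Names of experiments with positive pipeline impact, best first."""
    positive = [e for e in experiments if e["pipeline_delta"] > 0]
    ranked = sorted(positive, key=lambda e: e["pipeline_delta"], reverse=True)
    return [e["name"] for e in ranked]


days_to_ship = velocity_days(EXPERIMENTS)
pipeline_change = total_impact(EXPERIMENTS)
keep_running = winners(EXPERIMENTS)
```

Tracked week over week, the first number tells you whether the team is shipping faster and the other two tell you what to kill and what to double down on.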

The Most Important Hire on Your GTM Team

Every GTM team needs someone who can harness this. Not an engineer who understands sales in theory. Not a salesperson who took a Python course. Someone who lives at the intersection — who understands the GTM workflow deeply enough to know what to build and has enough technical comfort to direct Claude Code effectively.

This role barely existed two years ago. Now it’s the most impactful hire you can make.

The most AI-enabled person on your team cannot be someone prompting ChatGPT. That bar was fine in 2024. In 2026, with AI reliably completing 15-hour coding tasks and that number still climbing, the bar is someone who can build systems (enrichment pipelines, scoring models, agent workflows, campaign automation) and iterate on them weekly.

The chart is only going to keep pointing up. The teams that hired this person six months ago are already compounding their advantage. The teams that wait another six months will be trying to catch up to a moving target.