Five AI Moments That Changed How I Think About GTM

I spent Christmas break in an existential crisis.

Half excitement, half terror. Claude Code had just clicked for me, and it forced me to rethink everything about the work we do at Zevenue. Not in an abstract “AI will change everything” way. In a very concrete “the way I’ve built this business for four years may not be the right model anymore” way.

To process it, I mapped out every moment AI genuinely shattered my worldview. Not the hype moments. The ones where I sat with my laptop and realized the ground had shifted under me. There are five of them, and the most important pattern is that they’re happening more frequently.

1. ChatGPT Release — Late 2022

This is where most people’s AI timeline starts, including mine.

The first time I opened ChatGPT, I spent an hour asking it questions about cold email strategy. The answers were generic — but they were coherent. That was the part that mattered. You could have a conversation with a machine about a nuanced topic and get back responses that were structured, mostly accurate, and occasionally insightful.

For GTM specifically, the immediate use case was obvious: draft copy faster. And that’s what everyone did for the first six months. Use ChatGPT to write emails, landing pages, LinkedIn posts. The output was mediocre without heavy editing, but the speed was real.

The bigger shift was psychological. Once you interact with a model that can reason about your domain — even imperfectly — you start seeing your workflows differently. Every repetitive knowledge task starts looking like a candidate for automation. That mental shift was more valuable than any specific output ChatGPT produced in 2022.

Then GPT-4 arrived and the quality gap closed dramatically. The copy got better. The reasoning got sharper. The “heavy editing” phase shortened. But the fundamental interaction model was the same: you prompt, it responds, you evaluate. One exchange at a time. No memory. No compounding.

2. Claygent Drops — 2023

I remember when Clay was positioned more like Airtable — a flexible, general-purpose data tool that happened to be popular with GTM teams. The pivot to “the home of GTM data” hadn’t fully happened yet.

Then Claygent launched, and it changed how we do lead research to this day.

Before Claygent, enrichment meant querying a database. You’d pull data from Apollo or ZoomInfo and get back whatever structured fields they had on file. Job title, company size, industry, maybe a phone number. If you wanted something more specific — like what technology a company uses, or what their recent hiring patterns suggest about their priorities — you either did it manually or you didn’t do it at all.

Claygent was an AI research agent that could go find information on the open web. Not a web scraper pulling structured data from a known page. An agent that could reason about where to look, what to extract, and how to format it. You could ask “What is this company’s primary customer segment?” and it would read their website, their case studies, their job postings, and give you an answer.

This was far better than a static database, a human researcher with a five-minute timer, or a traditional web scraper. It turned enrichment from a data lookup into a research task. And it made it possible to build highly specific prospect lists based on custom criteria that no database vendor would ever offer as a standard field.

For us at Zevenue, this was the moment outbound became a data engineering problem. The quality of your list — and specifically the signals you could attach to each prospect — became the primary differentiator between campaigns that worked and campaigns that didn’t.
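To make "outbound as a data engineering problem" concrete, here is a minimal sketch of scoring a list by the signals attached to each prospect. Everything in it is an assumption for illustration: the signal names, the weights, the threshold, and the company names are made up, and none of it reflects Clay's API or Zevenue's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Prospect:
    company: str
    # Custom signals a research agent might attach; names are hypothetical.
    signals: dict[str, bool] = field(default_factory=dict)


# Hypothetical weights: how much each signal matters for one campaign.
WEIGHTS = {"hiring_sdrs": 3, "uses_competitor_tool": 2, "recent_funding": 1}


def score(prospect: Prospect) -> int:
    """Sum the weights of every signal that is present and true."""
    return sum(w for name, w in WEIGHTS.items() if prospect.signals.get(name))


def build_list(prospects: list[Prospect], min_score: int) -> list[Prospect]:
    """Keep prospects at or above the threshold, highest score first."""
    kept = [p for p in prospects if score(p) >= min_score]
    return sorted(kept, key=score, reverse=True)


# Made-up example data.
prospects = [
    Prospect("Acme", {"hiring_sdrs": True, "recent_funding": True}),
    Prospect("Globex", {"uses_competitor_tool": True}),
    Prospect("Initech", {}),
]
shortlist = build_list(prospects, min_score=3)
print([p.company for p in shortlist])
```

The point of the sketch is the shape, not the numbers: once signals are structured fields, the list becomes something you can filter, rank, and test like any other dataset.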

3. Deep Research — 2024

Watching ChatGPT produce analyst-level research on any topic, at any time, was genuinely jarring.

Before Deep Research, serious market analysis required either expensive analyst reports (Gartner, Forrester) or dedicating a team member to spend days pulling from multiple sources, synthesizing, and formatting. Competitive landscapes, market sizing, buyer persona analysis, industry trend reports — all of it was labor-intensive.

Deep Research collapsed that timeline to minutes. Not just surface-level summaries, either. It would pull from earnings calls, industry publications, academic research, government data, and synthesize it into something coherent with citations.

For GTM teams, this meant anyone could produce research that previously required dedicated product marketing or strategy resources. A founder could run a competitive analysis during a lunch break. An SDR leader could map a new vertical before deciding whether to build a list. A sales engineer could prep for a call with account-level research that would have taken hours to assemble manually.

We take this for granted now, which is itself telling. In under a year, “instant analyst-level research” went from mind-blowing to expected. That normalization speed is part of the pattern.

4. Replit Agent — Mid 2024

This was the first time I experienced hands-off building. Before Replit Agent, using AI to build software meant iterative prompting. You’d describe what you wanted, the model would generate code, you’d test it, find bugs, describe the bugs, get fixes, test again. It worked, but you could get stuck in debug hell for hours. The building was assisted, not autonomous.

Replit Agent introduced 3+ hours of agent runtime. You could describe a project, walk away, and come back to something functional. Not perfect, but functional enough to evaluate and iterate on — which is a fundamentally different workflow than line-by-line debugging with a chatbot.

For Zevenue, this was when we started building internal tools. Not just scripts and automations, but actual applications. Dashboard prototypes, data processing pipelines, internal admin tools. Things that would have required hiring a developer or using no-code tools with all their limitations.

The shift wasn’t just capability. It was ambition. When building software takes months and requires specialized skills, you only build what’s absolutely necessary. When building takes hours and you can describe what you want in plain English, you start building things you wouldn’t have even considered before. Internal tools for edge cases. Custom solutions for specific client problems. Prototypes to test ideas before committing resources.

5. Claude Code — Late 2024 / Early 2025

This is the one that sent me into the Christmas break spiral.

The logic is simple and it hit me all at once:

- Code is the best way to solve most knowledge work problems. Not all of them. But the majority of tasks that involve processing information, applying logic, and producing output are better done in code than manually.

- Coding is basically solved. Not “AI writes perfect code every time.” But AI can now reliably architect, implement, test, and debug real software systems. The autonomous coding benchmarks keep climbing (4 hours, then 7, then 15), and the trajectory is clear.

If both of those things are true, then agents can do nearly any form of knowledge work. Better and faster than humans. That conclusion is what kept me up at night.

For Zevenue specifically, Claude Code meant three things. First, shipping product — building tools and systems that would have been impossible without a development team. Second, Claude Code as the daily driver — not a tool you open for specific tasks, but the default interface for all GTM work. Third, building better solutions for nearly everything we do — because every process, every workflow, every deliverable is now a candidate for a code-driven approach.

The persistent context is what separates Claude Code from everything that came before it. ChatGPT conversations are ephemeral. Claygent runs are isolated. Deep Research reports are standalone documents. Claude Code sessions compound. Every build, every experiment, every piece of context you add to the project makes the next session smarter. You’re not just using a tool. You’re building a system that gets better over time.
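A toy sketch of the difference between ephemeral and compounding context, in case it helps. This is not how Claude Code stores anything internally; the file name, the helper functions, and the notes are all hypothetical. It only shows the idea that each session starts from what earlier sessions wrote down.

```python
import json
import tempfile
from pathlib import Path


def load_context(path: Path) -> list[str]:
    """Return notes saved by earlier sessions, or an empty list on first run."""
    return json.loads(path.read_text()) if path.exists() else []


def run_session(path: Path, new_note: str) -> list[str]:
    """Start from everything saved so far, add one note, and save it back."""
    notes = load_context(path)
    notes.append(new_note)
    path.write_text(json.dumps(notes, indent=2))
    return notes


with tempfile.TemporaryDirectory() as tmp:
    context_file = Path(tmp) / "project_notes.json"  # hypothetical file name
    # Made-up notes standing in for what a project accumulates over time.
    run_session(context_file, "ICP: B2B SaaS, 50-500 employees")
    run_session(context_file, "Short subject lines tested best")
    third = run_session(context_file, "Client prefers CSV exports")
    print(len(third))  # the third session sees all earlier notes plus its own
```

An ephemeral chat is the same loop without the file: every session starts from an empty list.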

The Pattern That Matters

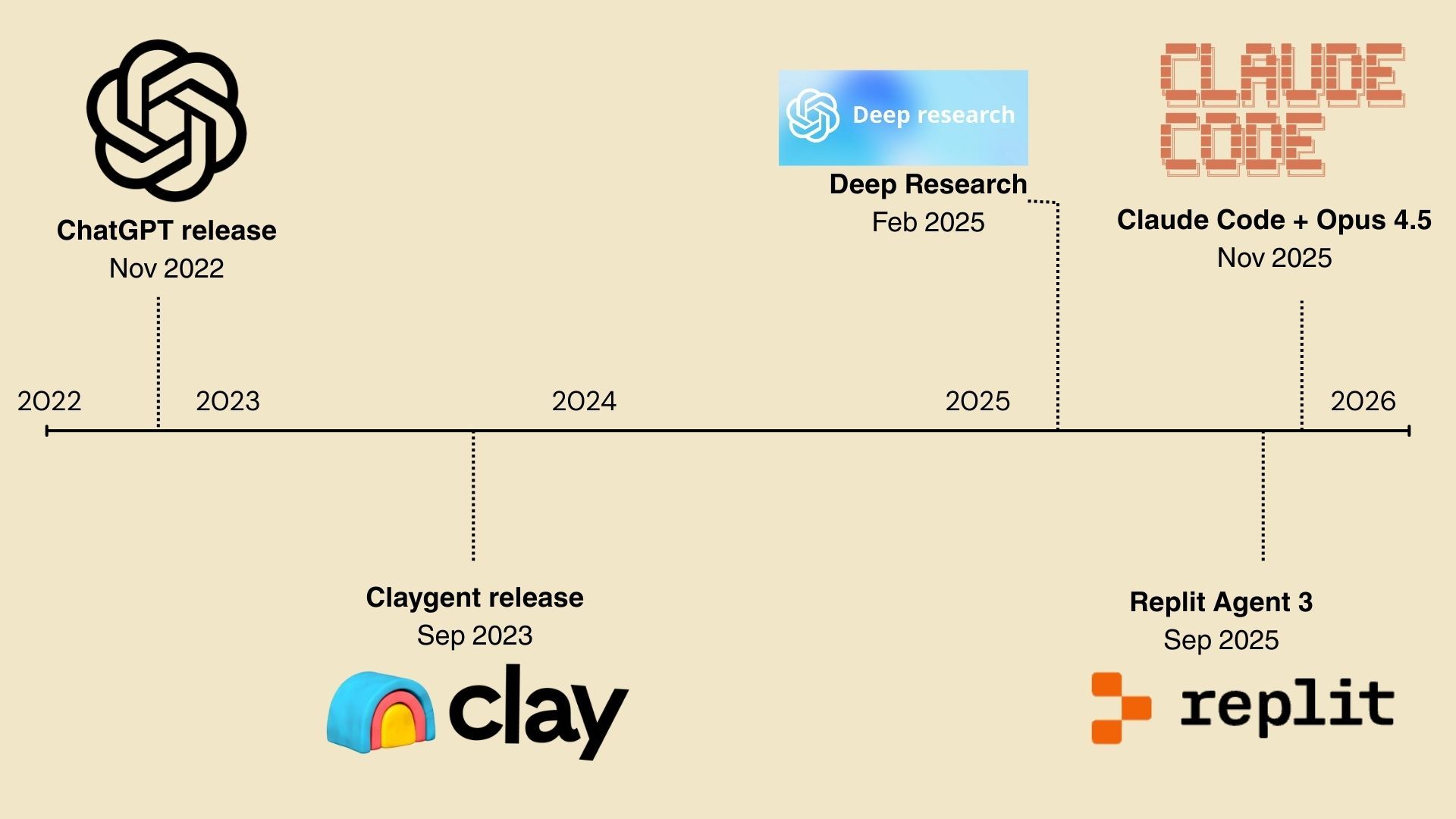

Look at the timeline again:

- ChatGPT — late 2022

- Claygent — 2023

- Deep Research — 2024

- Replit Agent — mid 2024

- Claude Code — late 2024

The gaps between these moments are shrinking. Roughly a year between the first and second. About a year to the third. Then only months to the fourth, and months again to the fifth. The acceleration is real and it shows no sign of slowing.

Each moment also represents a step change in autonomy. ChatGPT required you to drive every interaction. Claygent could research autonomously within a narrow scope. Deep Research could synthesize across sources without guidance. Replit Agent could build for hours without intervention. Claude Code can build, iterate, test, and deploy across sessions with compounding context.

I have no clue what number 6 will be. But based on the pattern, it’s coming sooner than I expect, and it will make number 5 look as quaint as number 1 does now.

The teams that are building systems on top of these capabilities today will be ready for number 6. The teams that are still debating whether AI is relevant to GTM will be scrambling to catch up to capabilities that have already moved on.